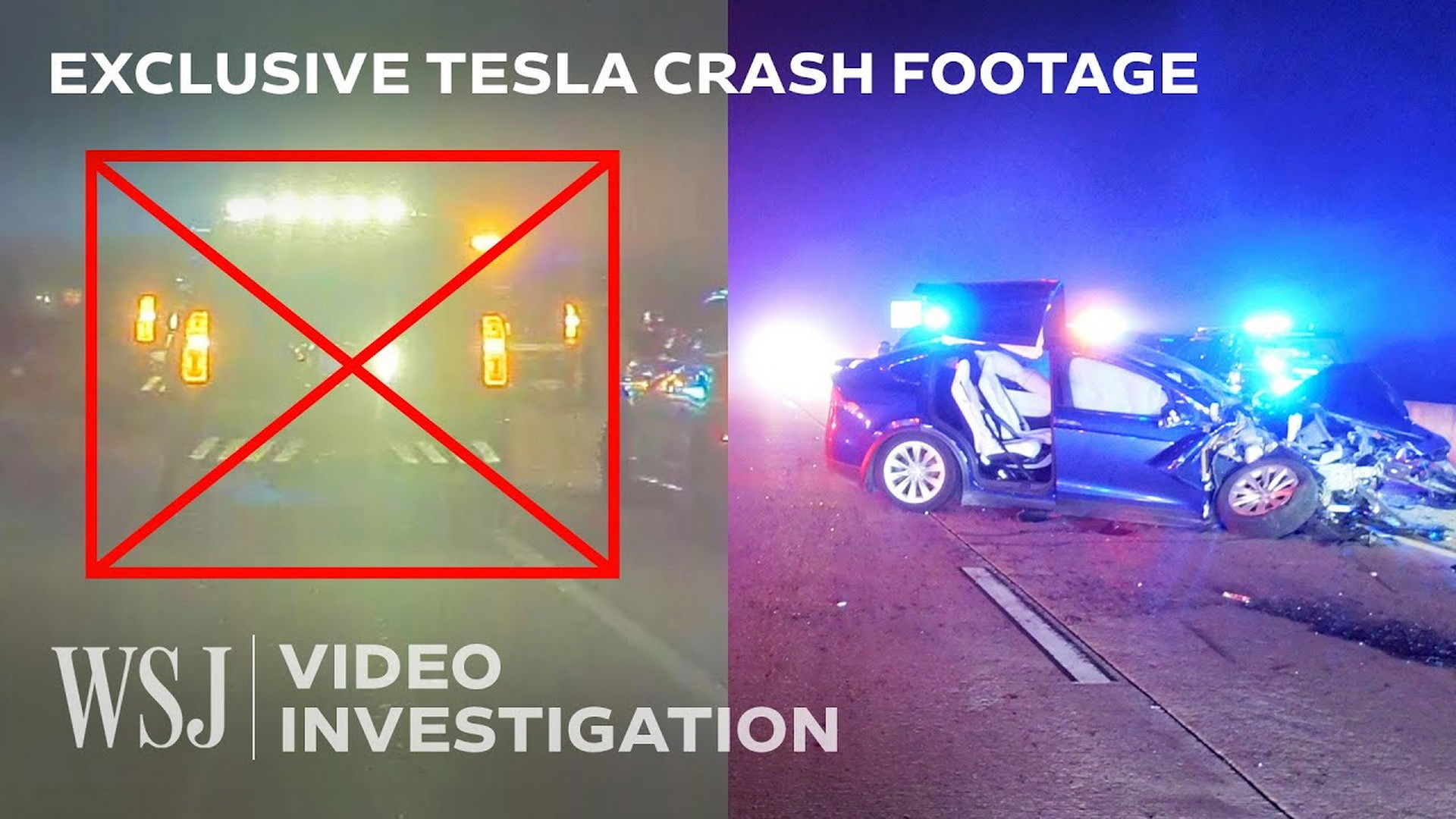

New Footage Shows Tesla On Autopilot Crashing Into Police Car After Alerting Driver 150 Times::Six officers who were injured in the crash are suing Tesla despite the fact that the driver was allegedly impaired

I have a lot of trouble understanding how the NTSB (or whoever’s ostensibly in charge of vetting tech like this) is allowing these not-quite self driving cars on the road. The technology doesn’t seem mature enough to be safe yet, and as far as I can tell, nobody seems to have the authority or be willing to use that authority to make manufacturers step back until they can prove their systems can be integrated safely into traffic.

It’s just ADAS - essentially fancy cruise control. There are a number of autonomous vehicle companies who are carefully and successfully developing real self-driving technology, and Tesla should be censured and forbidden for labeling their assistance software as “full self-driving.” It’s damaging the real industry.

It’s not “not-quite-self-driving” though, it’s literal garbage. It’s cruise control, lane assist and brake assist. The robot vision in use is horrible.

There are Tesla engineers bad-mouthing the system openly.

Musk is a scammer and they need to issue an apology for all of the claims around autopilot, probably pay a great deal of money, and then change the name and advertising around it.

Oh, and also this guy should never drive again.

$$$ that’s how.

This is stupid. Teslas can park themselves, they’re not just on rails. It should be pulling over and putting the flashers on if a driver is unresponsive.

That being said, the driver knew this behavior, acted with wanton disregard for safe driving practices, and so the incident is the driver’s fault and they should be held responsible for their actions. It’s not the court’s job to legislate.

It’s actually the NTSB’s job to regulate car safety, so if they don’t already have it, Congress needs to grant them the authority to regulate what AI behavior is acceptable and to define safeguards against misbehaving AI.

There’s no way the headline is true. Zero percent. The car will literally do exactly what you stated if it goes too long without driver engagement and I’ve experienced it first hand.

Evidently, he was aware enough to respond to the alerts, per the logs (as stated in the WSJ video that’s in the article). It shows a good bit of the footage, too.

Seems like they need something better for awareness checking than just gripping the wheel and checking where your eyes are pointed. And obviously better sensors for object recognition.

The headline doesn’t state that the warnings were consecutive.

Perhaps the driver was just aware enough to keep squelching warnings and prevent the car from stopping altogether?

I’ll grant you, though, 150 warnings is still a little tough to believe…

The driver is responsible for this accident, but Tesla should still be liable imo for all the shady and outright misleading advertising around their so-called “self driving”. Compare Tesla’s marketing to GM’s or Hyundai’s, both of which essentially have parity with Tesla’s system in terms of actual features, and you’ll see a big difference.

Sounds like the injured officers are suing. It’s a civil case not criminal, so I’m not sure how much the court would actually be asked to legislate. I’d be interested to hear their arguments, though I’m sure part of their reasoning for suing Tesla over the driver is they have more money.

> It should be pulling over and putting the flashers on if a driver is unresponsive.

Yes. Actually, just stopping in the middle of the road with hazard lights would be sufficient.

That’s 150 more warnings than a regular car would give. Ultimately it’s the driver’s fault.

If the driver was unresponsive in a normal car, it would stop.

The driver was responding. If he didn’t respond the car would have stopped.

If this was a normal car he probably would have just crashed earlier.

TIL cruise control doesn’t exist

Setting aside the driver issue, isn’t this another case that could’ve been prevented with LIDAR?

You know what might work: program the car so that after the second unanswered “alert” the autopilot pulls the car over, or reduces speed and turns on the hazards. On the third violation, autopilot is disabled for that car for a period of time.

I drive a Ford Maverick that is equipped with adaptive cruise control, and if I get 3 “keep your hands on the wheel” notifications, it deactivates adaptive cruise until the vehicle is completely turned off and on again. It blew my mind to learn that Tesla doesn’t do something similar.

This is literally exactly how it works already. The driver must have been pulling on the steering wheel right before it gave him a strike. The system will warn you to pay attention for a few seconds before shutting down. Here’s a video: https://youtu.be/oBIKikBmdN8

Ah, so it’s just people defeating the system.

The principle with cars is that you don’t distract the driver from driving. Having a system that takes over driving does exactly that, so the idea of the system is flawed to begin with.

I have to say this is extremely inaccurate imo. Self driving takes over the menial tasks of keeping the car in the lane, watching the speed, etc. and allows an attentive driver to focus on more high level tasks like looking at the road ahead, watching the sides of the road for potential hazards, and keeping more aware of their blind spots.

Just because the feature can be abused does not inherently make it unsafe. A drunk driver can use cruise control to more accurately control the vehicle’s speed and avoid a ticket. Does that make it a bad feature? I wouldn’t say so.

Autopilot and other driver assist systems are good when used responsibly and cautiously. It’s frustrating to see people cause an accident after misusing the system and blame the technology instead. This is why we can’t have nice things.

> It’s frustrating to see

> This is why we can’t have nice things

It is also frustrating to see people whining for technology when they should rather think about dead policemen and rescuers.

You should get your priorities straight if you ever hope to be taken seriously

> The system will warn you to pay attention

… and if we have learned anything from that incident, it is that the warnings have been worthless.

The system can be tricked even by the worst drunkards! 150 times in a row.

> for a few seconds before shutting down.

A few seconds are not enough. The crash was already unavoidable.

You’re misinterpreting what I said and conflating two separate scenarios in your 2nd statement. I didn’t say anything about the system warning “for a few seconds before shutting down” in the event of an imminent collision. It warns the driver before shutting down if the driver fails to hold the steering wheel during normal driving conditions.

The warnings were worthless because the driver kept responding to them just before they timed out and shut autopilot down. It would be even worse if the car immediately pulled off the road and stopped in traffic without warning the driver first.

They aren’t subtle either: after you fail to touch the wheel for about 5-10 seconds, it starts beeping loudly and flashing an icon on the screen.
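To make the disagreement in this thread concrete, here is a rough Python sketch of the two policies people are describing. It is not Tesla’s or Ford’s actual logic. The class name, the 10-second timeout, and the 4-second response delay are all made-up assumptions for illustration. It only shows why a monitor that disengages on *unanswered* warnings can issue 150 of them to a driver who keeps responding, while a cumulative cap like the 3-notification limit described for the Maverick would cut out early.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class AttentionMonitor:
    """Hypothetical hands-on-wheel monitor; every threshold here is illustrative."""
    timeout_s: float = 10.0              # assumed: how long a warning may go unanswered
    max_warnings: Optional[int] = None   # assumed: cumulative cap, None means no cap
    warnings: int = 0
    engaged: bool = True

    def warn(self, response_delay_s: Optional[float]) -> bool:
        """Issue one warning; response_delay_s is None if the driver never responds.

        Returns True if the assist system stays engaged afterwards.
        """
        if not self.engaged:
            return False
        self.warnings += 1
        timed_out = response_delay_s is None or response_delay_s > self.timeout_s
        over_cap = self.max_warnings is not None and self.warnings >= self.max_warnings
        if timed_out or over_cap:
            self.engaged = False
        return self.engaged


def simulate(monitor: AttentionMonitor, delays: Iterable[Optional[float]]) -> AttentionMonitor:
    """Feed a sequence of driver response delays (seconds) into the monitor."""
    for delay in delays:
        monitor.warn(delay)
    return monitor


if __name__ == "__main__":
    # A driver who answers every warning after 4 seconds, 150 times in a row.
    sluggish_driver = [4.0] * 150

    timeout_only = simulate(AttentionMonitor(), sluggish_driver)
    print("timeout only:", timeout_only.warnings, "warnings, engaged =", timeout_only.engaged)

    capped = simulate(AttentionMonitor(max_warnings=3), sluggish_driver)
    print("3-warning cap:", capped.warnings, "warnings, engaged =", capped.engaged)
```

With the timeout-only policy the assist stays engaged through all 150 warnings, because each one is answered in time. With the cap it shuts off at the third warning no matter how quickly the driver responds.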

This is not a case of autopilot causing an accident, this is a case of an impaired driver operating a vehicle when they should not have been. If the driver was using standard cruise control, would we be blaming the vehicle because their foot wasn’t touching the accelerator when the accident happened? No, we wouldn’t.

> This is not a case of autopilot causing an accident, this is a case of an impaired driver

It is both, of course. The drunkard and the autopilot both contributed their share to a danger that turned deadly.

Driving drunk is already forbidden.

What Tesla has brought on the road here should be forbidden as well: lane assist combined with adaptive cruise control AND such a bunch of blind sensors.

The driver was on autopilot. Autopilot is cruise control and lane assist. It’s not FSD. Tesla didn’t bring that “to the road”. The driver was drunk, and as with most autopilot or FSD accidents… it’s user error.

Still unaware of a proven FSD accident.

They didn’t say he didn’t respond to the alerts. If you don’t respond, autopilot turns off.

Poor ~~drunk~~ impaired driver falling victim to autonomous driving… Hopefully that driver lost their license.

That doesn’t solve the problem of autopilot not making the right choices. What if the driver wasn’t drunk, but had a heart attack? What if someone put a roofie in their drink? What if the driver was diabetic or hypoglycemic and suffered a blood glucose drop? What if they had a stroke?

Furthermore, what if the driver got drunk BECAUSE the car’s AI was advertised as being able to drive for you? Think of false publicity.

If your AI can’t handle one simple case of a driver being unresponsive, that’s negligence on the company’s part.

This source keeps pushing Tesla propaganda. There’s always an angle trying to sell that it wasn’t Tesla’s fault.

Officers injured at the scene are blaming and suing Tesla over the incident.

…

And the reality is that any vehicle on cruise control with an impaired driver behind the wheel would’ve likely hit the police car at a higher speed. Autopilot might be maligned for its name but drivers are ultimately responsible for the way they choose to pilot any car, including a Tesla.

I hope those officers got one of those “you don’t pay if we don’t win” lawyers. The responsibility ultimately resides with the driver and I’m not seeing them getting any money from Tesla.

Well, in the end it’s up to whether Tesla’s ADAS is compliant with laws and regulations. If there really were 150 warnings by the ADAS without it disengaging, this might be an indicator of faulty software and therefore Tesla being at least partially at fault. It goes without saying that the driver is mostly to blame but an ADAS shouldn’t just keep on driving when it senses that the driver is incapacitated.

Also from the article:

> Data from the Autopilot system shows that it recognized the stopped car 37 yards or 2.5 seconds before the crash. Autopilot also slows the car down before disengaging altogether.

Hard to argue Tesla is at fault when the driver was clearly impaired and at fault here.

It’s also so misleading that Tesla uses the word Autopilot for what is basically adaptive cruise control and lane assist.

I think Mercedes is the only car company that will accept blame for a self-driving or self-parking failure. That should tell you something.

So few people will pay for that add-on that they may never need to.

It’s what you get when you design places that require cars for everything

So self-driving cars are not so self-driving… Huh, whodathunk it lol /s

I hope the cops win. Autopilot allows for a driver to completely disengage their attention from the car in a way that’s not possible with just cruise control.

There’s no way you can drop a human in a life threatening critical situation with 2.5 seconds to make a decision and expect them to make reasonable decisions. Even stone cold sober, that’s a lot to ask of a person when the car makes a critical mistake like this.

On cruise, the driver would still have to be aware that they were driving. With autopilot, the driver had likely passed out and the car carried on its merry way.

Because people can’t pass out with just cruise control? He didn’t have 2.5 seconds. According to the article he had 45 minutes of multiple warnings.