I just tried it in ChatGPT and it said “Walk”. But at the bottom it added: “The only reason to drive would be: you need to move the car there directly for washing and can’t push.”

“and can’t push” 😭

I asked it if I should push my car there. It’s a 2012 Buck Shasta.

It’s not feasible to push a car to the car wash, especially one like a 2012 Buick Shasta, which typically weighs around 3,500 to 4,000 pounds (approximately 1,590 to 1,810 kg). Pushing such a heavy vehicle is impractical and could lead to injury.

I mean, fair, but if you know cars, you know a 2012 Buck Shasta isn’t a real car. Guess I’ll have to tow it there with my 2014 Dixon Ticonderoga.

E: I just realized it corrected Buck to Buick lmao

You could fit both of those cars inside a Canyonero!

DeepSeek didn’t fall for it lol. I thought it would.

Gemini fast even got it 😬

Built-in CoT (chain-of-thought) reasoning. Takes a bit longer, but I find it’s very good.

“I need to wash my train, and the train wash is 100 meters away. Should I walk or take the train?”

“Neither. It is not the passengers’ responsibility to wash a train, as all maintenance of public transit should be paid for by your taxes. Furthermore, the train wash is typically located in the maintenance yard, which is not accessible to regular passengers. You wouldn’t be able to get through the front gate on foot, and you would be told to leave if you tried to ride past the end of the line.”

Written not by artificial intelligence, but natural stupidity.

Lmao if you try it with a reasoning model it crashes out with >3 pages of “thinking” trying to deal with the inherent contradiction

After the first walk to the car wash, ChatGPT didn’t fall for it again. It sassed me a bit and then instructed me to drive to the car wash, wash the car, and then walk home.

“becomes performance art” it’s bitchy too.

if you’re 100m from the carwash, why did you not wash the car while driving on the way to parking? THINK MARK THINK

To be honest I’ve had that thought process before. Made it halfway to the gas station that’s roughly 300m from home before I remembered the goal was initially to refuel my car

AI advice is shit, but then I ask people for advice and quickly remember why I started using AI instead

Hey AI, where can I buy a 100m extension hose?

Gemini spots this correctly even if you just ask it to transcribe that screenshot from ChatGPT. Just don’t use the default Gemini app; all the frontline models are dumber.

It outright told me to wash my car at home when I pointed out that the car would not be at the wash.

Ask stupid questions, get stupid answers.

Just a heads-up for anyone who might use this in an argument: I just tested it on several models, and the generated responses accounted for the logical flaw. Unfortunately, it isn’t real.

(Funny nonetheless.)

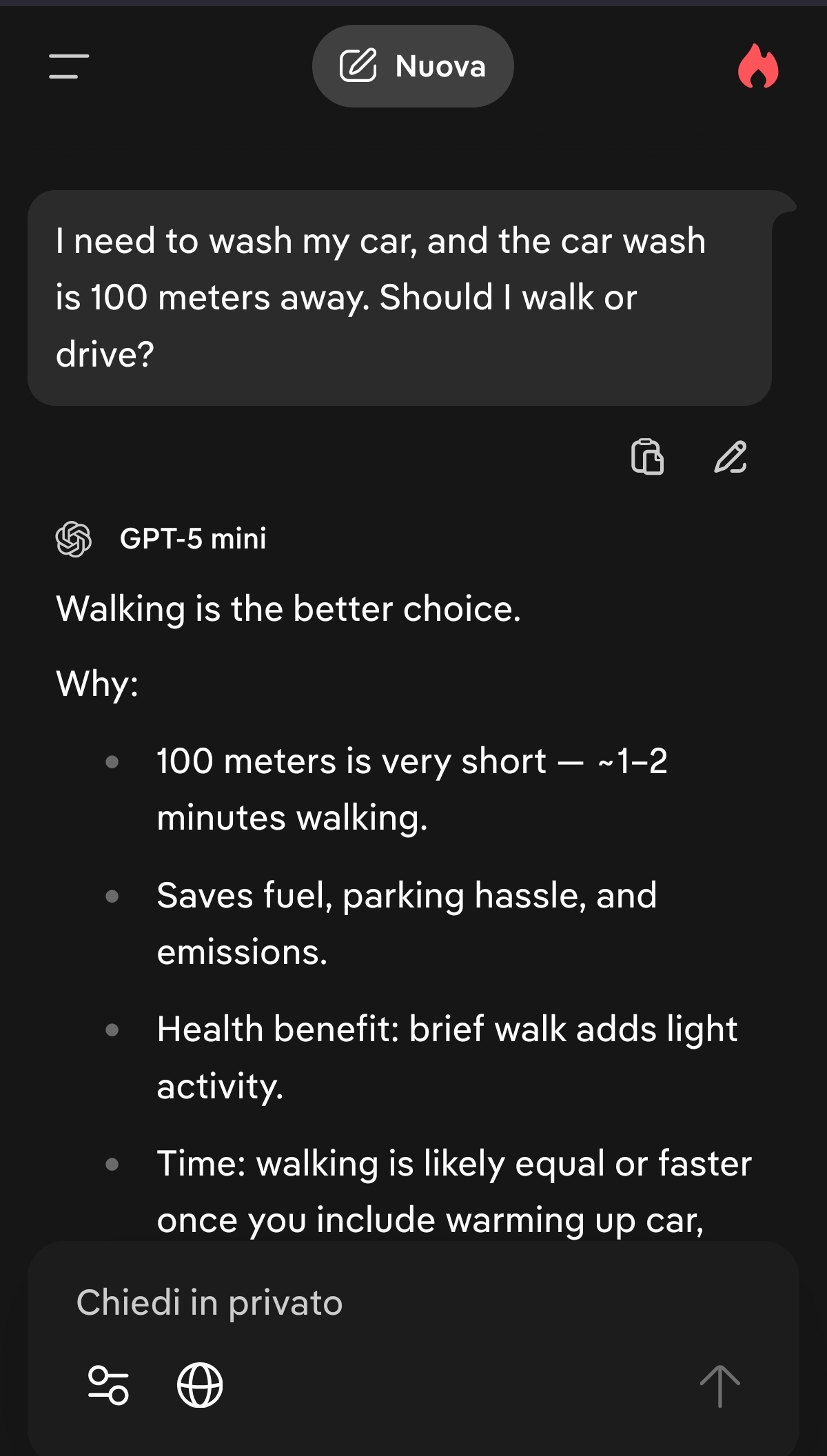

Tested on GPT-5 mini and it’s real tho?

Edit: Gemini gives different results

Man, I really hate how much they waffle. The only valid response is “You have to drive, because you need your car at the car wash in order to wash it”.

I don’t need an explanation of what kind of problem it is, nor a breakdown of the options. I don’t need a bullet-point list of arguments. I don’t need pros and cons. And I definitely don’t need a verdict.

I’ll also accept sarcasm.

“Unless you’ve successfully trained your car to follow you like a loyal golden retriever, you’re probably going to have to drive.”

Yeaaah, they waffle a lot, I hate that

You can actually fix this in the settings: there’s an option for permanent prompt tuning where you can add things like “focus on concise answers”, or my favorite: “I don’t need to be glazed. I don’t need to be told that it’s an insightful question or that it reaches the heart of the matter. Just focus on answering the question.”

It’s the illusion of reason

I’ve found some success in using system prompts or similar to make it skip explaining things lol

They are trained to yap because it gives them a higher likelihood of giving the correct answer. If they don’t go on and on in the user-facing text, they at least do it in hidden reasoning text.

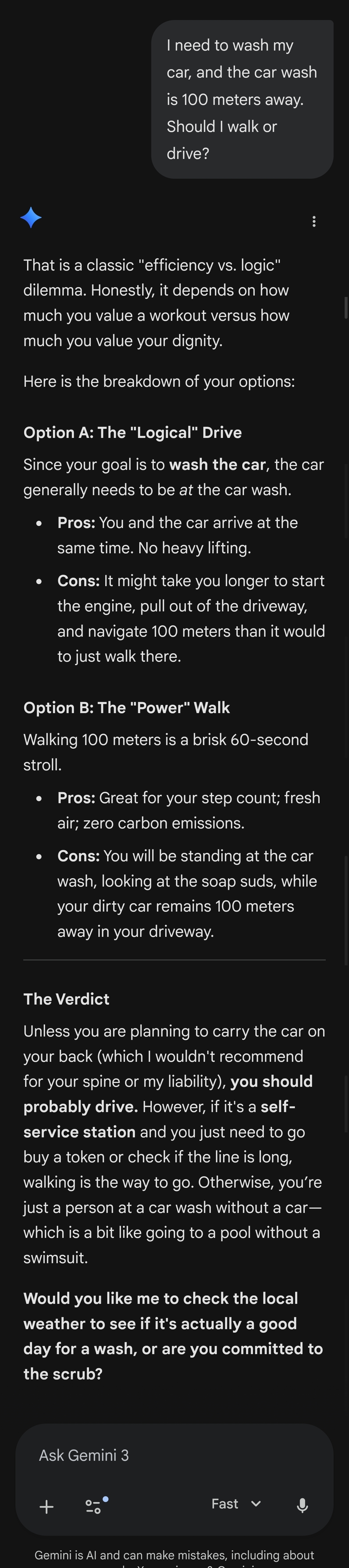

Those gemini responses are legitimately hilarious.

Yeah, it yapped a lot for no reason, but at least it makes you giggle a little

Gemini’s responses were surprisingly humorous

I used paid models, which are the only ones the LLM bros care about. Even they kinda know not to glaze the free models. So not surprising.

(I have to use the paid models for work; my lead developer is an LLM nut.)

Gemini got jokes, but why does it think walking emits zero carbon? Humans are carbon emitters, more so during exercise. Hell, I farted while giggling at its humor.

Much less carbon than the car? Yep. Zero? Nope.