cross-posted from: https://lemmy.ca/post/61948688

Excerpt:

“Even within the coding, it’s not working well,” said Smiley. “I’ll give you an example. Code can look right and pass the unit tests and still be wrong. The way you measure that is typically in benchmark tests. So a lot of these companies haven’t engaged in a proper feedback loop to see what the impact of AI coding is on the outcomes they care about. Lines of code, number of [pull requests], these are liabilities. These are not measures of engineering excellence.”

Measures of engineering excellence, said Smiley, include metrics like deployment frequency, lead time to production, change failure rate, mean time to restore, and incident severity. And we need a new set of metrics, he insists, to measure how AI affects engineering performance.

“We don’t know what those are yet,” he said.

One metric that might be helpful, he said, is measuring tokens burned to get to an approved pull request – a formally accepted change in software. That’s the kind of thing that needs to be assessed to determine whether AI helps an organization’s engineering practice.
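As a rough illustration of that suggested metric, here is a minimal sketch of "tokens burned per approved pull request". The record layout (`tokens_used`, `approved`), the function name, and the sample numbers are all hypothetical and not from the article. Counting tokens spent on rejected PRs toward the total is also an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical record; field names are assumptions for illustration.
    tokens_used: int  # LLM tokens consumed while producing this PR
    approved: bool    # True if the PR was formally accepted


def tokens_per_approved_pr(prs: list[PullRequest]) -> Optional[float]:
    """Total tokens across all PRs divided by the number of approved PRs.

    Tokens burned on rejected PRs are included, on the assumption that they
    are part of the cost of getting to an approved change.
    Returns None when no PR was approved.
    """
    approved_count = sum(1 for pr in prs if pr.approved)
    if approved_count == 0:
        return None
    total_tokens = sum(pr.tokens_used for pr in prs)
    return total_tokens / approved_count


# Made-up sample data, not measurements from any real team.
sample = [
    PullRequest(tokens_used=120_000, approved=True),
    PullRequest(tokens_used=300_000, approved=False),
    PullRequest(tokens_used=80_000, approved=True),
]
print(tokens_per_approved_pr(sample))  # 250000.0
```

Tracked over time, a number like this would only say something useful alongside the quality metrics listed earlier, since a cheap PR that raises the change failure rate is not a win.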

To underscore the consequences of not having that kind of data, Smiley pointed to a recent attempt to rewrite SQLite in Rust using AI.

“It passed all the unit tests, the shape of the code looks right,” he said. “It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite. Two thousand times worse for a database is a non-viable product. It’s a dumpster fire. Throw it away. All that money you spent on it is worthless.”

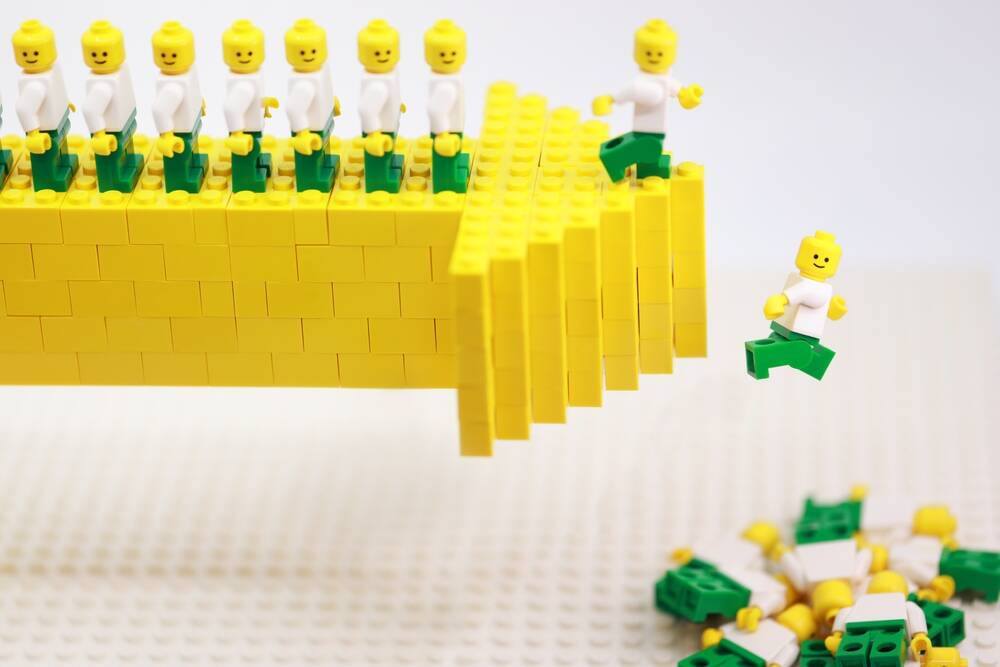

All the optimism about using AI for coding, Smiley argues, comes from measuring the wrong things.

“Coding works if you measure lines of code and pull requests,” he said. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that that’s moving in a positive direction.”

Being in an economic bubble during the age of (over)information is really weird. We’re getting two articles per day confirming that we’re in a big ass bubble but it just keeps on going. I preferred not really knowing how bad things are.

Everybody knew dot-coms in 2000 and houses in 2007 were bubbles, too. But they kept investing anyway, because they didn’t know when it would pop and FOMO is a helluva drug.

Also, something to keep in mind: https://awealthofcommonsense.com/2014/02/worlds-worst-market-timer/

AI works great. I work in the sphere of production defect detection in manufacturing and it’s been working pretty well for a decade or more to predict machine failures and spot defective materials or products.

LLMs as a digital yes-man for business are what doesn’t work.

Yeah, unfortunately the marketing people have made LLM synonymous with AI. It’s a damn shame.

LLMs have a use case, it’s just really limited, and the vast majority of what companies, and people broadly, use them for is either not the best case for them or not even something they can/should do.

If you know how to use them, they can save time. You still need to validate everything they give you, but as a developer I can use one to generate small code snippets or give it documentation and ask questions as a quick reference.

But these are not automation tools. They are not worker replacements, and they aren’t replacements for research even if they can get you started on research…

LLMs, and neural nets in general, can never be AGI no matter how much companies wish they could be.

It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite.

Pretty much my experience with LLM coding agents. They’ll write a bunch of stuff, and come up with all kinds of arguments about why what they’re doing is in fact optimal and perfect. If you know what you’re doing, you’ll quickly find a bunch of over-complicated things and just plain pitfalls. I’ve never been able to understand the people who claim LLMs can build entire projects (the people that say stuff like “I never write my own code anymore”), since I’ve always found them to be pretty trash at anything beyond trivial tasks.

Of course, it makes sense that it’ll elaborate endlessly about how perfect its solution is, because it’s a glorified auto-complete, and there’s plenty of training data with people explaining why “solution X is better”.

This article says that the AI-coded Rust rewrite of SQLite ran 2,000 times slower, but the linked source article says it ran more than 20,000 times slower. Muddling up 2,000 and 20,000 seems a bit sloppy for journalism about code performance.

The article cited by The Register cites this more detailed analysis in turn: https://blog.katanaquant.com/p/your-llm-doesnt-write-correct-code

Performance of the AI-generated version was 20,000 times slower on one specific benchmark, but “only” about 2,000 times slower when averaging over multiple different benchmarks (which is, imo, a better measurement of the code’s quality).
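To show how a single-benchmark figure and an averaged figure can differ by an order of magnitude, here's a minimal sketch. The per-benchmark slowdown numbers below are made up for illustration and are not the ones from the linked analysis. The geometric mean is just one common way to average ratios, and the linked analysis may have aggregated differently.

```python
import math


def geometric_mean(ratios: list[float]) -> float:
    """Geometric mean, a common way to average slowdown/speedup ratios."""
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical slowdown factors (rewrite time / SQLite time) per benchmark.
slowdowns = {
    "bench_a": 20_000.0,
    "bench_b": 2_000.0,
    "bench_c": 200.0,
}

worst_case = max(slowdowns.values())
typical = geometric_mean(list(slowdowns.values()))

print(f"worst single benchmark: {worst_case:.0f}x slower")
print(f"geometric mean across benchmarks: {typical:.0f}x slower")
```

With these invented inputs the worst case is 20,000x while the averaged figure comes out around 2,000x, so both headlines can be "true" depending on which number gets quoted.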

So I suppose The Register pulled from multiple sources (as you should) and just linked to the most top-level of all of them.

Thanks for that link. It has a lot more detail.

Companies and governments told us that an energy transition is “too costly” or “too disruptive to society” but when it comes to AI disrupting and even ending people’s lives…

They just say, “deal with it.”

No bro the new model from 3 months ago is infinite gooder than what they tested. In 12-18 months we get agi or some shit i dunno just 10 more billions$ bro.
