- cross-posted to:

- science@mander.xyz

Nowhere in the article do they say that they could verify what the LMM (sic!) actually came up with or how plausible it was.

It’s not hard for any genAI to come up with some “solution”. It actually being correct is the hard part.

Mm, I agree. It’s not that interesting until someone has verified it.

That’s where the human comes in though. The value of genAI is that it can generate outputs that can trigger ideas in your head which you can then go and evaluate. A lot of the time the trick is in finding the right thread to pull on. That’s why it’s often helpful to talk through a problem with somebody or to start writing things down. The process of going through the steps often triggers a memory or lets you build a connection with another concept. LLMs serve a similar role where they can stimulate a particular thought or memory that you can then apply to the problem you’re solving.

I love that you just blew past what the commenter was talking about to preach the AI gospel. It’s fitting with the subject matter.

I’m not sure what you’re claiming I blew past here. I simply pointed out that nobody is expecting LLMs to validate their own solutions, or trusting them to come up with a correct solution independently. Ironic that you’re the one who actually decided to blow past what I wrote to make a personal attack.

And that’s not what the commenter was talking about. He wasn’t expecting anything else from the LLM. He wanted to see the actual proof that any of this happened, and that it was verified by a human. All the article said was that this happened and it worked. If that’s true, what were the results and how were they verified?

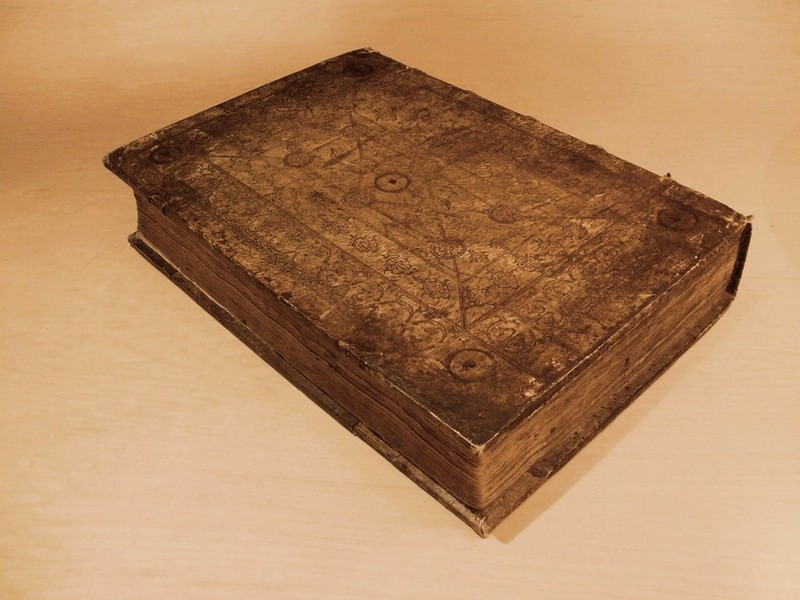

In other words, you’re saying neither of you could be arsed to click through to the actual discussion on the project page before making vapid comments? https://blog.gdeltproject.org/gemini-as-indiana-jones-how-gemini-3-0-deciphered-the-mystery-of-a-nuremberg-chronicle-leafs-500-year-old-roundels/

Again, you didn’t answer the question. This is just the prompt and the answer. Where is the proof of the truth claim? Where is the actual human saying “I’m an expert in this field and this is how I know it’s true”? Just because it has a good explanation for how it did the translation doesn’t mean the translation is correct. If I missed it somewhere in this wall of text, feel free to point me to the quote, but that is just an AI paste bin to me.

Nobody was claiming a proof, that’s just the straw man the two of you have been using. What the article and the researchers’ original post say is that it helped them come up with a plausible explanation. Maybe actually try to engage with the content you’re discussing?